AI for the greater good

What’s possible with AI right now?

impact programs that we’ll speed up or eliminate with AI tools that:

administrators extract accurate information from PDFs.

The future of social impact is vitally human

Grantees want to spend more time passionately advocating for and actively addressing their cause. This starts with reducing the time they spend answering rote, basic questions on each and every application.

burden?

"…for time. The biggest benefit of AI in organizations that really can't afford the staffing levels of a corporate [organization] is in those basic, menial tasks."

Reviewers want to remove human error without removing human agency. This starts with smarter automated workflows and accurate document parsing.

Grant administrators want to make sure funds go where they're needed most. This starts with the strictest security measures and with accessibility tools like translations and helpdesk documentation.

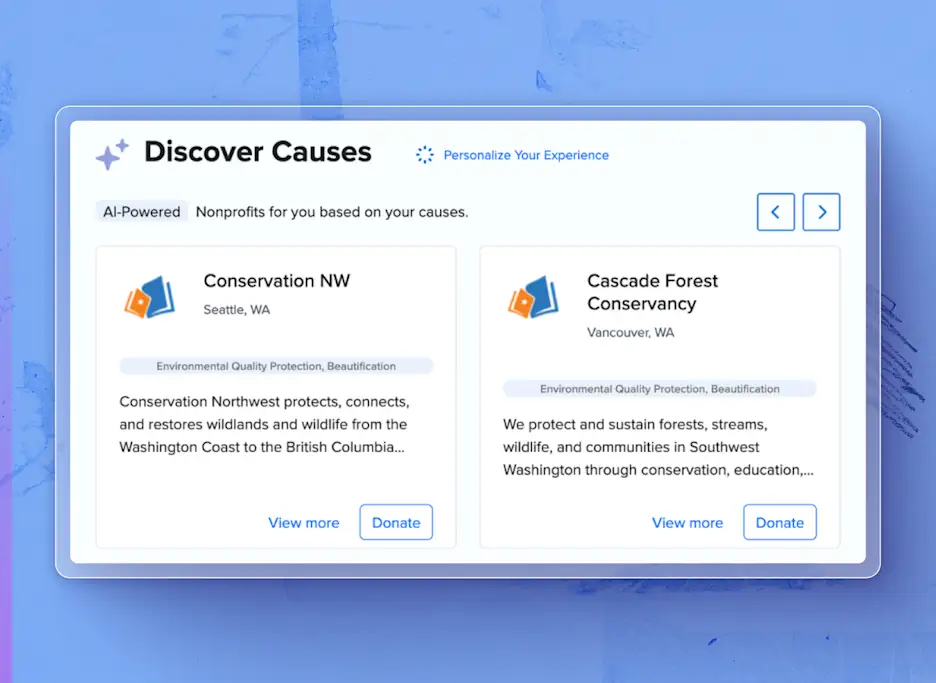

CSR program managers and their employees want to do good without logistics getting in the way. This starts with connecting the dots between every stakeholder through automations.

Ethical AI starts with firm principles

Empowering

Accountable

Transparent

Equitable

Private & Secure


AI tools built with you, for you

Partnering with the best to create the best

Submittable is proud to be a Microsoft Tech for Social Impact prioritized partner. We're also using Azure OpenAI services to create industry-leading AI tools built upon the most advanced infrastructure.

Learn with us

affects the social impact sector and the world at large.